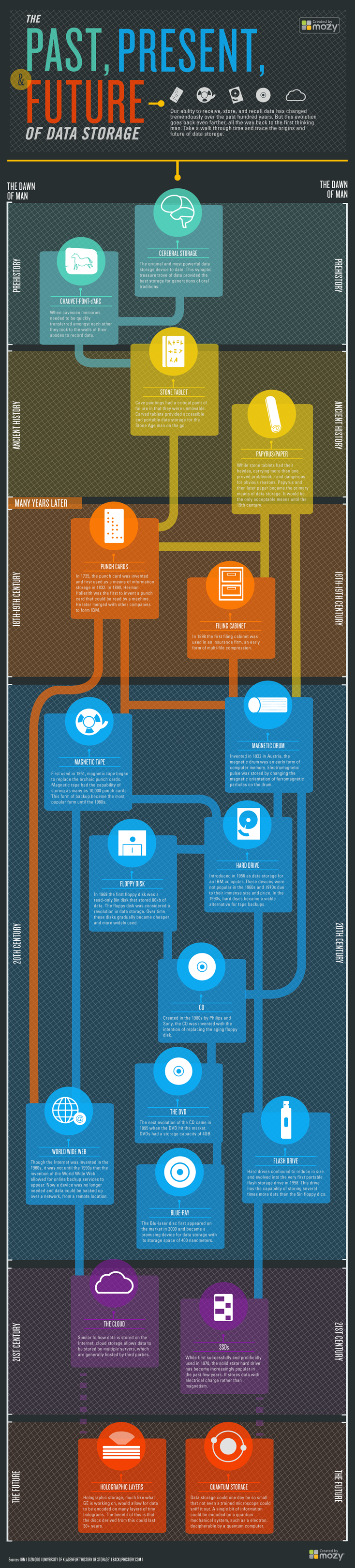

Some of the world’s largest companies today operate entirely from the cloud, or at least have outsourced a major portion of their services to a cloud environment. As we all know, this trend is growing rapidly. With cloud storage prices at all-time lows and still trending downward, how can you resist the temptation to use cloud-based storage services? There are, however, drawbacks to this transition, and security concerns top the list as the most commonly cited.

Cloud storage, as the name suggests, primarily refers to the increasingly prevalent online storage services hosted in the cloud. The cloud today offers virtually unlimited storage capacity, redundancy, high availability, and stable performance. For instance, Amazon Web Services (AWS) offers cloud storage for uses ranging from general data storage to backups of web databases. For corporations and individual users alike, cloud-based technologies provide ease of access, minimal downtime, few server crashes, and few application accessibility issues.

Despite its many alluring advantages, cloud computing also brings new security challenges, in particular concerning the reliability, integrity, and privacy of data, since the data owner no longer has direct control over it. While data confidentiality can be protected by means of encryption and tokenization, verifying data integrity remains a much less clear-cut task.

After data is moved to the cloud, you essentially relinquish ultimate control over it; the data is now managed entirely by the cloud service provider. Scary as that thought may be, it is essential for you to be able to verify that your valuable data is still available in the cloud in its original form and is ready for retrieval when necessary. How do you know that your data has not been corrupted, deleted, modified, or moved from one server to another at the behest of your cloud service provider?

One possible way to assure high availability of outsourced data is simple replication to other service providers, but this adds to your costs. Another option is to have a workflow in place that periodically retrieves your data for verification, similar to conducting audit checks. Neither option is particularly appealing, however. To mitigate these problems, a widely used approach is to employ a challenge-response mechanism.
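For comparison, the retrieve-and-verify option can be sketched in Python as follows, assuming you keep a SHA-256 digest of each file locally before uploading it. The download step is simulated with an in-memory byte string, and the function names are hypothetical; the point is that every audit requires transferring the entire file back.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()

def audit_full_download(downloaded: bytes, expected_digest: str) -> bool:
    """Compare the digest of a freshly downloaded copy with the one kept locally."""
    return sha256_hex(downloaded) == expected_digest

original = b"quarterly report contents"
local_digest = sha256_hex(original)  # computed and kept locally before upload

# 'downloaded' stands in for the bytes retrieved from the provider at audit time.
downloaded = original
print(audit_full_download(downloaded, local_digest))         # True
print(audit_full_download(downloaded + b"!", local_digest))  # False
```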

A challenge-response mechanism is a family of protocols in which one party issues a challenge and the party on the other end must provide a valid response to complete it. The main idea is that a cloud service provider that stores incomplete or incorrect data will be unable to respond to the challenges correctly, allowing you to detect the anomaly.
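Below is a minimal Python sketch of one simple challenge-response scheme, assuming the client hashes a random nonce together with the whole file: before upload, the client precomputes a handful of (nonce, expected answer) pairs, and at audit time it sends one unused nonce. Only a provider still holding the complete, unmodified file can compute SHA-256(nonce || data). The provider classes and function names are hypothetical, and the provider is simulated in memory. Each nonce can be used only once, so this particular scheme supports only as many audits as were precomputed.

```python
import hashlib
import hmac
import os

def response(nonce: bytes, data: bytes) -> bytes:
    """Compute SHA-256(nonce || data); answering requires the full, intact data."""
    return hashlib.sha256(nonce + data).digest()

def prepare_challenges(data: bytes, count: int) -> list:
    """Client side, before upload: draw random nonces and record the expected answers."""
    challenges = []
    for _ in range(count):
        nonce = os.urandom(16)
        challenges.append((nonce, response(nonce, data)))
    return challenges

class HonestProvider:
    """Hypothetical provider that keeps the data intact."""
    def __init__(self, data: bytes):
        self.data = data

    def answer(self, nonce: bytes) -> bytes:
        return response(nonce, self.data)

class TruncatingProvider(HonestProvider):
    """Hypothetical provider that has silently lost the second half of the data."""
    def __init__(self, data: bytes):
        super().__init__(data[: len(data) // 2])

def audit(provider, challenges: list) -> bool:
    """Use up one unused challenge and compare the provider's answer with the stored one."""
    nonce, expected = challenges.pop()
    return hmac.compare_digest(provider.answer(nonce), expected)

data = b"customer database export " * 1000
challenges = prepare_challenges(data, count=5)  # kept locally; the local data copy can now be deleted
print(audit(HonestProvider(data), challenges))      # True
print(audit(TruncatingProvider(data), challenges))  # False
print(len(challenges))                              # 3
```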

A robust approach should also support an unbounded number of audit interactions, so that misbehavior by the server at any time will be detected. In cloud storage, support for dynamic data operations (such as inserting, modifying, and deleting data blocks) can be of vital importance to both remote storage and database services. In many cases, you may not be able to perform the integrity checks yourself, or members of your team may lack the necessary expertise; in that case, setting up an audit server might just do the trick.
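One well-known way to allow an unlimited number of audits is spot checking with keyed tags, sketched below in Python under stated assumptions: the file is split into fixed-size blocks (4096 bytes here, an arbitrary choice), each block is uploaded together with an HMAC-SHA256 tag over its index and contents, and the client keeps only the secret key. Each audit samples a fresh random set of block indices, so the same key supports any number of audits. The function names and the in-memory list standing in for the provider are hypothetical. This sketch does not securely handle dynamic updates: after a block is modified, the provider could replay the old block with its still-valid tag, which is why schemes supporting dynamic operations typically add a structure such as a Merkle hash tree or per-block version numbers.

```python
import hashlib
import hmac
import os
import random

BLOCK_SIZE = 4096  # assumed block size in bytes

def split_blocks(data: bytes) -> list:
    return [data[i:i + BLOCK_SIZE] for i in range(0, len(data), BLOCK_SIZE)]

def make_tag(key: bytes, index: int, block: bytes) -> bytes:
    """HMAC-SHA256 over the block index and contents, so blocks cannot be swapped."""
    return hmac.new(key, index.to_bytes(8, "big") + block, hashlib.sha256).digest()

def outsource(key: bytes, data: bytes) -> list:
    """Client side: upload each block together with its tag; keep only the key."""
    return [(block, make_tag(key, i, block)) for i, block in enumerate(split_blocks(data))]

def spot_check(key: bytes, stored: list, num_blocks: int, samples: int) -> bool:
    """One audit: challenge a fresh random set of indices and verify each returned tag."""
    rng = random.SystemRandom()
    for i in rng.sample(range(num_blocks), min(samples, num_blocks)):
        if i >= len(stored):  # provider can no longer produce this block
            return False
        block, tag = stored[i]  # stands in for the provider's response to index i
        if not hmac.compare_digest(tag, make_tag(key, i, block)):
            return False
    return True

key = os.urandom(32)
data = os.urandom(BLOCK_SIZE * 100)  # exactly 100 blocks
stored = outsource(key, data)
print(spot_check(key, stored, num_blocks=100, samples=10))  # True

# Corrupt one block; repeated audits, each with a fresh sample, will very likely catch it.
stored[42] = (b"\x00" * BLOCK_SIZE, stored[42][1])
print(any(not spot_check(key, stored, num_blocks=100, samples=10) for _ in range(50)))
```

With one of 100 blocks corrupted, a single 10-block sample misses it with probability 90/100, so 50 independent audits all miss it with probability 0.9**50, roughly 0.5%. Checking a small random sample keeps each audit cheap while still catching damage with high probability over repeated audits.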

The auditing server is a reliable, independent entity that challenges the cloud service provider on behalf of clients and verifies the correctness of the stored data without learning any of the information it contains. For improved efficiency, the auditing server can also perform batch auditing, processing auditing requests from multiple users simultaneously.
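The Python sketch below shows how an auditor could check several users' files in a single pass while holding nothing but a Merkle root and a block count per user. It assumes users encrypt their blocks before upload, so the auditor only ever sees ciphertext; this is a weaker guarantee than the privacy-preserving auditing schemes in the literature, which use homomorphic authenticators so that the auditor does not see even the challenged blocks. Likewise, "batch" here simply means looping over all users' challenges together, whereas published batch-auditing schemes aggregate the verification algebraically. All class and function names are hypothetical.

```python
import hashlib
import hmac
import random

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def build_levels(blocks: list) -> list:
    """All levels of a Merkle tree, leaves first; an unpaired node is hashed with itself."""
    levels = [[h(b"\x00" + block) for block in blocks]]  # 0x00 prefix marks leaves
    while len(levels[-1]) > 1:
        cur = levels[-1]
        nxt = []
        for i in range(0, len(cur), 2):
            right = cur[i + 1] if i + 1 < len(cur) else cur[i]
            nxt.append(h(b"\x01" + cur[i] + right))  # 0x01 prefix marks inner nodes
        levels.append(nxt)
    return levels

def prove(levels: list, index: int) -> list:
    """Authentication path for one leaf: the sibling hash at each level, bottom-up."""
    path = []
    for level in levels[:-1]:
        sibling = index ^ 1
        path.append(level[sibling] if sibling < len(level) else level[index])
        index //= 2
    return path

def verify(root: bytes, index: int, block: bytes, path: list) -> bool:
    """Recompute the root from a returned block and its path, then compare."""
    node = h(b"\x00" + block)
    for sibling in path:
        node = h(b"\x01" + node + sibling) if index % 2 == 0 else h(b"\x01" + sibling + node)
        index //= 2
    return hmac.compare_digest(node, root)

class Provider:
    """Hypothetical storage provider holding each user's encrypted blocks."""
    def __init__(self):
        self.files = {}

    def store(self, user: str, blocks: list) -> None:
        self.files[user] = (list(blocks), build_levels(blocks))

    def respond(self, user: str, index: int):
        blocks, levels = self.files[user]
        return blocks[index], prove(levels, index)

class Auditor:
    """Hypothetical third-party auditor: keeps only a root and block count per user."""
    def __init__(self):
        self.records = {}

    def register(self, user: str, root: bytes, num_blocks: int) -> None:
        self.records[user] = (root, num_blocks)

    def batch_audit(self, provider: Provider, samples: int = 3) -> dict:
        """Challenge random blocks for every registered user in one pass."""
        rng = random.SystemRandom()
        report = {}
        for user, (root, n) in self.records.items():
            indices = rng.sample(range(n), min(samples, n))
            report[user] = all(verify(root, i, *provider.respond(user, i)) for i in indices)
        return report

provider, auditor = Provider(), Auditor()
for user in ("alice", "bob"):
    blocks = [f"{user}-ciphertext-{i}".encode() for i in range(8)]  # stand-ins for encrypted blocks
    provider.store(user, blocks)
    auditor.register(user, build_levels(blocks)[-1][0], len(blocks))

print(auditor.batch_audit(provider, samples=8))  # {'alice': True, 'bob': True}
provider.files["bob"][0][5] = b"tampered"       # silently corrupt one of Bob's blocks
print(auditor.batch_audit(provider, samples=8))  # {'alice': True, 'bob': False}
```

Because each user computes the root before upload and hands only that root to the auditor, the provider cannot make a modified block verify without finding a SHA-256 collision.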

By Syed Raza