This article in the Cloud Banking Insights Series looks at big data in the financial services industry and asks whether it is a security threat or a massive opportunity. How does big data fit into an overall cloud strategy? Most financial institutions (FIs) have a positive mind-set towards consuming IT from the cloud. It not only reduces IT investment but also frees up precious resources and budget that FIs can invest in customer-centric activity. Financial institutions have long, and in many cases lifelong, relationships with their customers. The convergence of cloud and data analytics helps FIs work towards the common goal of building those relationships and increasing product uptake by tailoring and appropriately segmenting their approach. There is a great deal of “noise” around this topic, both about what big data really means and about how it can cause disruption in terms of security and privacy. Throughout this article we will share some insight on both to help reduce the “noise levels” in the industry.

Big data has been around for a number of years now and everyone has an opinion on it. It is not unusual to see two people having a conversation about big data in which they are not talking about the same thing at all: one says it is all about volume, while the other says it is about variety. Some even suggest that small, fast data shouldn’t be classified as big data at all. It is like two people discussing Ruby and Java. Both are programming languages, but beyond that they have little in common, and only when you apply some context does the conversation become productive. So let us set some context on the topic and then see how it applies to financial services.

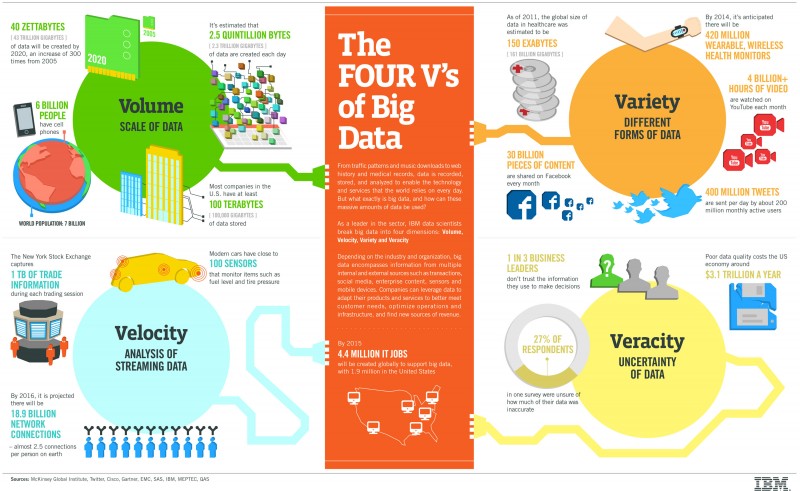

Big data is really all about four things, known as the 4 V’s of Big Data: Volume, Velocity, Variety and Veracity.

(Image Source: IBM)

Now that we understand the 4 V’s, you might be asking why this matters and what has generated such massive amounts of data. This is a great question, and the answer is quite simple.

Data volumes have grown roughly tenfold every five years, and about 85% of that growth comes from new data types, ranging from clickstream and time-series data to columnar and spatial data. The potential is sky-high when this data explosion is combined with the consumerization of IT and the huge number of people connected through social channels.
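Here is what “around 10x every five years” means as a yearly rate. If volume grows by a factor of 10 over 5 years, the equivalent compound annual growth rate is r = 10^(1/5) − 1 ≈ 0.585, or roughly 58.5% a year. The small Python sketch below works through that arithmetic. The 100 TB starting volume is a hypothetical figure used only for illustration, not one from this article.

```python
def annual_growth_rate(multiple: float, period_years: float) -> float:
    """Return the yearly rate r such that (1 + r) ** period_years == multiple."""
    return multiple ** (1.0 / period_years) - 1.0


def projected_volume(start: float, multiple: float, period_years: float, years: float) -> float:
    """Project a data volume forward, assuming steady compound growth."""
    return start * multiple ** (years / period_years)


rate = annual_growth_rate(10.0, 5.0)
print(f"Implied annual growth: {rate:.1%}")  # Implied annual growth: 58.5%

# Hypothetical starting point: a bank holding 100 TB today.
for years in (5, 10):
    print(f"After {years} years: {projected_volume(100.0, 10.0, 5.0, years):,.0f} TB")
# After 5 years: 1,000 TB
# After 10 years: 10,000 TB
```

Compounding is the point here. A tenfold increase per five-year period becomes a hundredfold increase over ten years, which is why storage and processing choices made today need to allow for growth.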

The potential is huge, but the “noise” levels right now are so high that it is difficult to achieve real value. As with many other innovations, many companies already have some kind of Big Data solution that, in their opinion, will revolutionize the way they work. But when you dig a bit deeper, do they really know how to capitalise on it? Another misconception is that Big Data is all about Hadoop and that you can achieve anything once you adopt it. This is definitely not the case. Big Data is an approach: a strategy for generating better business insight and intelligence. The technologies and products we use will always depend on the enterprise’s real goals. If we are talking about storing the data (yes, Big Data can also be about how you store it), we might focus on MongoDB, Cassandra, RavenDB and others. If we are talking about processing, we might be looking at Hadoop, Spark, Kinesis, Streaming Analytics and others. Every choice depends on the context, and the Hadoop ecosystem will not solve everything on its own. There is no “one size fits all” or “silver bullet”, and thinking otherwise can lead to disastrous consequences.
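A concrete processing pattern makes the storage/processing distinction easier to see. The sketch below runs the map, shuffle and reduce steps popularised by Hadoop MapReduce in plain, in-process Python. It totals spend per merchant category from a handful of hypothetical card transactions. This is not Hadoop’s API, and the record layout is an assumption for illustration. The same logical pattern could run on Hadoop, Spark or a single machine, and which engine fits depends on the volume and latency you actually face.

```python
from collections import defaultdict
from typing import Iterable, Iterator

# Hypothetical transaction record: (card_id, merchant_category, amount)
Transaction = tuple[str, str, float]


def map_phase(records: Iterable[Transaction]) -> Iterator[tuple[str, float]]:
    """Emit one (key, value) pair per record: category -> amount."""
    for _card_id, category, amount in records:
        yield category, amount


def shuffle_phase(pairs: Iterable[tuple[str, float]]) -> dict[str, list[float]]:
    """Group values by key, as a framework would do between map and reduce."""
    groups: dict[str, list[float]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(groups)


def reduce_phase(groups: dict[str, list[float]]) -> dict[str, float]:
    """Combine each key's values into a single total."""
    return {key: sum(values) for key, values in groups.items()}


transactions: list[Transaction] = [
    ("card-1", "groceries", 42.50),
    ("card-2", "travel", 310.00),
    ("card-1", "groceries", 17.25),
    ("card-3", "fuel", 60.00),
]

totals = reduce_phase(shuffle_phase(map_phase(transactions)))
print(totals)  # {'groceries': 59.75, 'travel': 310.0, 'fuel': 60.0}
```

Each phase is a separate function so that the map and reduce steps stay independent of where the data lives. That separation is what lets frameworks distribute the same logic across many machines.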

When looking at the market, it would be fantastic to see a common, unified approach to products that enable Big Data analytics, but unfortunately we are still far from that. This is very similar to what is happening with the cloud: just because you have it doesn’t make you an expert, and it by no means guarantees that you are realising its potential. Let’s not delude ourselves; we are all still trying to figure out its real power for our businesses, and that will depend on how we leverage it. To succeed in this transformation we first need to be humble enough to stop and learn without preconceptions. We also need to understand that this is simply another tool in our toolbelt to help our business succeed. Most importantly, we need to work to get the right data, at the right time, to the right person. Only then will we make this a success.

To reduce the noise levels that come with anything new, we need to start asking some level-setting questions. These should help you be much more successful in achieving your commercial and customer engagement objectives. The questions are:

After answering these questions you should have a clear idea of how much “noise” you have been dealing with. You should also see why you sometimes feel that you and others are talking about big data while speaking different languages. This context should also enable a genuinely productive discussion, because everyone will be singing from the same hymn sheet.

Now that we understand the context, we also need to understand how the Big Data approach works and why. The approach is based on four phases:

Financial services has everything to gain from Big Data when the right strategy is in place. Take retail banking. With more data available, it becomes much easier to determine which products best suit a specific customer. Merging social data with the customer’s internal financial data forms a near-accurate picture of their preferences, likelihood of investing, personality and, most importantly, any associated risk. Applications of Big Data in the financial services industry are only scratching the surface, and many more will appear over time. One example is the ability for FIs to build a large database of customers’ social and professional information and use it for near-real-time risk analysis, such as working out the insurance premium a customer will need to pay.
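The idea of merging social data with internal financial data can be made concrete with a minimal sketch. The Python below left-joins internal account data with external (for example, social or professional) attributes on a shared customer ID, then derives a coarse segment label. Every field name, threshold and segment label here is hypothetical. It shows the shape of the join, not a real scoring model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AccountProfile:
    """Internal data the bank already holds (hypothetical fields)."""
    customer_id: str
    avg_monthly_balance: float
    missed_payments_12m: int


@dataclass
class ExternalProfile:
    """External signals, e.g. from social or professional networks (hypothetical fields)."""
    customer_id: str
    interested_in_travel: bool
    recent_job_change: bool


def segment(account: AccountProfile, external: Optional[ExternalProfile]) -> str:
    """Combine both views into one illustrative segment label (thresholds are made up)."""
    if account.missed_payments_12m > 2:
        return "review-risk"
    if external is None:
        # No external data for this customer: rely on internal data alone.
        return "standard"
    if external.interested_in_travel and account.avg_monthly_balance > 5_000:
        return "offer-travel-card"
    if external.recent_job_change:
        return "offer-financial-review"
    return "standard"


def merge_and_segment(accounts: list[AccountProfile],
                      externals: list[ExternalProfile]) -> dict[str, str]:
    """Left-join external data onto accounts by customer_id, then segment each customer."""
    by_id = {e.customer_id: e for e in externals}
    return {a.customer_id: segment(a, by_id.get(a.customer_id)) for a in accounts}


accounts = [
    AccountProfile("c1", 8_200.0, 0),
    AccountProfile("c2", 1_100.0, 4),
    AccountProfile("c3", 2_400.0, 0),
]
externals = [
    ExternalProfile("c1", interested_in_travel=True, recent_job_change=False),
    ExternalProfile("c2", interested_in_travel=False, recent_job_change=True),
]

print(merge_and_segment(accounts, externals))
# {'c1': 'offer-travel-card', 'c2': 'review-risk', 'c3': 'standard'}
```

A left join is used so that customers without any external data (like `c3`) are still segmented on internal data alone. The risk check runs first, so a strong external signal never overrides a poor repayment history.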

Fraud Detection Example

Let’s use an example to check whether what we use today to perform fraud detection on credit cards is really a Big Data problem.

Let us start by setting the context of what Big Data means in this example.

So here is a more visual representation of what we have been talking about. Now we can see the real complexity, and why setting the context is so important. By now you should have a fairly good idea of the context of this example, how Big Data can help and how to structure your conversation around it.
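One place where the Velocity dimension appears in card fraud detection is a simple “velocity rule”: flag a card that is used too many times within a short window. The sketch below implements a per-card sliding-window counter in Python. The window length, threshold and event format are hypothetical assumptions, and it assumes each card’s events arrive in timestamp order. A real fraud engine would combine many signals rather than rely on a single rule like this.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class CardEvent:
    card_id: str
    timestamp: float  # seconds since an arbitrary epoch
    amount: float


class VelocityRule:
    """Flag a card whose transaction count in a sliding time window exceeds a limit."""

    def __init__(self, window_seconds: float = 60.0, max_transactions: int = 3) -> None:
        self.window_seconds = window_seconds
        self.max_transactions = max_transactions
        # Per-card queue of recent timestamps, oldest first.
        self._recent: dict[str, deque[float]] = defaultdict(deque)

    def check(self, event: CardEvent) -> bool:
        """Record the event and return True if it should be flagged as suspicious.

        Assumes events for a given card arrive in timestamp order.
        """
        times = self._recent[event.card_id]
        times.append(event.timestamp)
        # Drop timestamps that have fallen out of the window.
        while times and event.timestamp - times[0] > self.window_seconds:
            times.popleft()
        return len(times) > self.max_transactions


rule = VelocityRule(window_seconds=60.0, max_transactions=3)
stream = [
    CardEvent("card-9", 0.0, 20.0),
    CardEvent("card-9", 10.0, 25.0),
    CardEvent("card-9", 20.0, 30.0),
    CardEvent("card-9", 30.0, 15.0),   # 4th use within 60 s, so flagged
    CardEvent("card-9", 200.0, 40.0),  # earlier uses have left the window
]
flags = [rule.check(e) for e in stream]
print(flags)  # [False, False, False, True, False]
```

Keeping only a small deque of timestamps per card means each event is handled in amortised constant time. That matters when the rule has to keep up with a high-velocity stream of card authorisations.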

So, in summary, Big Data is an overhyped buzzword which by itself doesn’t mean anything. We need to dig much deeper to understand what it is really about, and always remember to:

We hope this article helps you achieve your goals and better understand how Big Data can change financial services. With all the data we have, it is only a matter of getting the right people (Data Scientists, Data Stewards, Data Engineers) working on it, and we will be much better at fulfilling our customers’ needs.

By Diaz Ayub