Executives embarking on the journey to becoming a digital enterprise are essentially asking IT to enable the enterprise to create new services and products and bring them to market faster. While cloud infrastructure services have been key to allowing IT to scale services better, the weak link in terms of performance and security is the network. Direct interconnection to cloud services has helped to solve some of these issues, but enterprises will eventually find that the network itself will be the pain point in transformation efforts unless new approaches to network architecture are considered.

In the past, the standard means of connecting to the cloud was through the public internet. This was sufficiently flexible, inasmuch as developers could access resources from different IaaS vendors, with Amazon Web Services and Microsoft Azure being the two most commonly used cloud service providers (CSPs). When a CSP has had outages in a region (e.g., Amazon's outage in February 2017), the outages have tended to highlight shortcomings in application deployment strategies. In that case, Amazon's service status dashboard was unavailable, along with services from numerous other web firms. Including multiple regions for application failover in a deployment strategy is certainly an important step to take. However, in most cases, the application still depends on the CSP's network to move data between regions. Beyond that, the enterprise network itself can remain a large, unaddressed issue in terms of performance and availability.

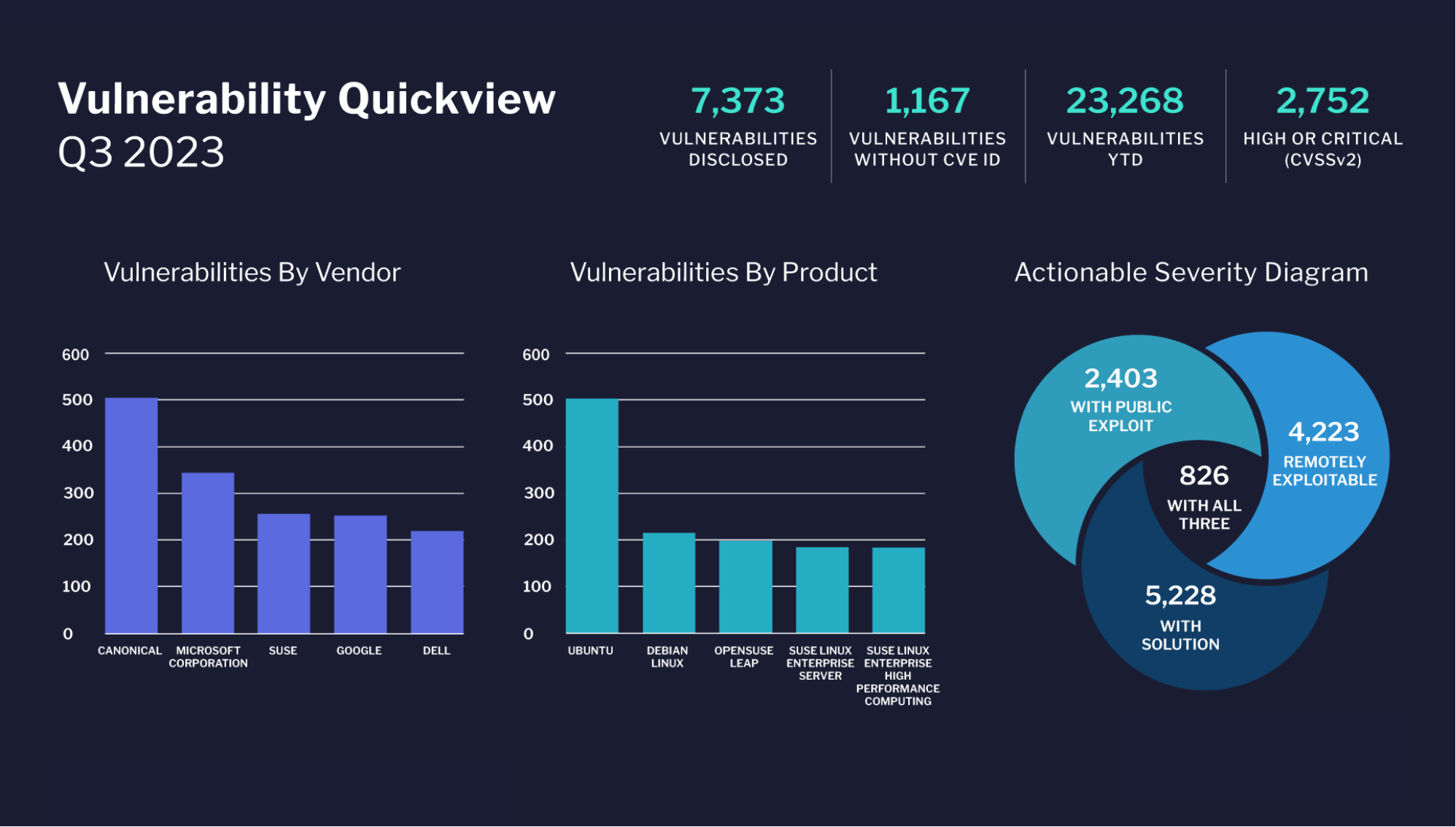

On the left side of the diagram below (Figure A), the enterprise has provisioned connections between branch offices and headquarters using MPLS links. Ingress and egress traffic to the internet, cloud providers and SaaS providers goes through links maintained at the headquarters.

Figure A: Diagram of remote/branch to enterprise to cloud

The diagram to the right illustrates how applications are located in a variety of datacenters around the globe and shows employees, partners, and customers accessing services via mobile devices. It shouldn't be hard to see that hairpinning traffic through the enterprise datacenter is suboptimal from a performance standpoint. Here's why:

Adding bandwidth doesn't solve the performance problem by itself. Think of it as a mass transit system: engineers can add more cars to carry more passengers, but the distance the train travels hasn't changed, and adding more trains may result in congestion at a station along the route (which is analogous to packet congestion at network peering points).
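To put rough numbers on the hairpinning and train analogies, the sketch below models a single transfer as one round trip of propagation delay plus the time to serialize the payload at the link rate. The path lengths, the 1 MB payload, and the figure of roughly 200 km per millisecond for light in fiber are illustrative assumptions; real traffic adds queuing, handshakes, and multiple round trips on top of this.

```python
# Minimal model: transfer time = round-trip propagation delay + serialization time.
# Assumptions (illustrative only): light in fiber covers ~200 km per millisecond,
# and queuing/processing delays are ignored.

FIBER_KM_PER_MS = 200.0  # ~200,000 km/s expressed as km per millisecond


def rtt_ms(path_km: float) -> float:
    """Round-trip propagation delay in milliseconds for a one-way path length."""
    return 2 * path_km / FIBER_KM_PER_MS


def transfer_ms(path_km: float, payload_mb: float, bandwidth_mbps: float) -> float:
    """One round trip plus the time to push the payload onto the link."""
    serialization_ms = payload_mb * 8000 / bandwidth_mbps  # MB -> megabits -> ms
    return rtt_ms(path_km) + serialization_ms


# Hypothetical paths: branch -> HQ -> cloud region (hairpinned) vs. branch -> cloud region.
hairpin_km = 1500 + 2500
direct_km = 1200

for bw in (100, 1000):
    print(f"{bw} Mbps: hairpin {transfer_ms(hairpin_km, 1, bw):.1f} ms, "
          f"direct {transfer_ms(direct_km, 1, bw):.1f} ms")
```

Raising bandwidth tenfold shrinks only the serialization term. The 40 ms of round-trip propagation on the hairpinned path stays put, which is the "distance the train travels" in the analogy.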

There is momentum building for the use of interconnection services to access the cloud. Equinix, a carrier-neutral multi-tenant datacenter (MTDC) provider, has built an industry-wide model for interconnection bandwidth consumption called the Global Interconnection Index (it's fashioned after Cisco's well-regarded Visual Networking Index). The model forecasts that interconnection bandwidth between enterprises and cloud/IT service providers will grow at a CAGR of 160% through 2020, reaching an aggregate bandwidth capacity of 547 Tbps.
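A 160% CAGR means each year's bandwidth is 2.6 times the previous year's. The sketch below shows the compounding arithmetic. The four-year horizon assumes a 2016 base year, which the forecast as quoted here does not state, so the implied starting capacity illustrates the math rather than reporting a figure from the index.

```python
def compound(value: float, cagr: float, years: int) -> float:
    """Grow a value at a compound annual growth rate (cagr given as a fraction)."""
    return value * (1 + cagr) ** years


def implied_start(end_value: float, cagr: float, years: int) -> float:
    """Back out the starting value that reaches end_value after `years` of growth."""
    return end_value / (1 + cagr) ** years


CAGR = 1.60       # 160% per year, as stated in the forecast
END_TBPS = 547.0  # forecast aggregate capacity in 2020
YEARS = 4         # ASSUMPTION: 2016 base year (not stated in the text)

multiplier = (1 + CAGR) ** YEARS
start = implied_start(END_TBPS, CAGR, YEARS)
print(f"Growth multiplier over {YEARS} years: {multiplier:.1f}x")
print(f"Implied base-year capacity: {start:.1f} Tbps")
```

Compounding at that rate multiplies capacity roughly 46-fold over four years, which is why even a modest starting base reaches hundreds of terabits per second.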

What does this mean for enterprises today? One of the solutions offered to address the performance issue with WANs and cloud is to move traffic off the public internet and onto direct, private connections. These services entail buying connectivity from a network service provider (NSP) that partners with one of the cloud providers. The NSP has already connected with the cloud provider at an MTDC, hence the "direct connect" terminology for these services. These services are a form of interconnection, which in its most basic form is defined as the exchange of traffic between two parties via distributed IT gear (routers and such). Among the cloud providers with offerings:

While on the surface it would seem simple enough to choose a provider to connect the enterprise to the cloud provider, there are different technology and pricing approaches used by each CSP that might impact whether a direct link service is useful or manageable in the long run.

Table 1: CSPs and interconnection services

| Cloud | Service Type | Port Fees | Egress Fees |
|---|---|---|---|
| Amazon | VLAN | Y, hourly | Y |
| Microsoft | BGP | Y, monthly | Y (1) |
| Google | BGP | Y, monthly | Y (2) |
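Because the table shows Amazon billing ports hourly while Microsoft and Google bill monthly, comparing offers means normalizing to a common period and then adding usage-based egress. The sketch below does that. The 730-hour month is a common billing approximation, and every rate in the example is a hypothetical placeholder, not any provider's published price (real prices also vary by port speed, location, and region).

```python
HOURS_PER_MONTH = 730  # approximation: 8,760 hours per year / 12


def monthly_port_cost(rate: float, billing: str) -> float:
    """Normalize a port fee to a monthly figure."""
    if billing == "hourly":
        return rate * HOURS_PER_MONTH
    if billing == "monthly":
        return rate
    raise ValueError(f"unknown billing period: {billing!r}")


def monthly_total(port_rate: float, billing: str,
                  egress_gb: float, egress_rate_per_gb: float) -> float:
    """Port fee plus usage-based egress for one month."""
    return monthly_port_cost(port_rate, billing) + egress_gb * egress_rate_per_gb


# Hypothetical placeholder inputs, not real price lists.
offers = {
    "hourly-billed port": monthly_total(0.50, "hourly", 10_000, 0.03),
    "monthly-billed port": monthly_total(300.0, "monthly", 10_000, 0.04),
}
for name, cost in offers.items():
    print(f"{name}: {cost:,.2f} per month")
```

Because egress is charged per gigabyte, the cheaper option can flip as traffic volume grows, which is one reason a direct link that looks economical at first may be harder to justify later.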

Both Microsoft and Google have options that allow traffic to traverse their respective private networks and egress the network in different regions. Google has previously offered direct peering for access to its public cloud services, and has more recently begun offering "direct interconnection" as a service for those with hybrid on- and off-premises private cloud environments who wish to manage private IP addresses under RFC 1918 across both the corporate datacenter network and GCP instances without requiring a NAT device or VPN tunnel.
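Managing RFC 1918 addresses across the corporate datacenter and GCP without NAT or a VPN tunnel only works if the address plan is unique end to end: each subnet must sit inside the private ranges, and no two subnets may overlap. The sketch below checks both conditions with Python's standard `ipaddress` module; the subnet names and CIDR blocks are hypothetical.

```python
import ipaddress
from itertools import combinations
from typing import Dict, List

# The three private IPv4 blocks reserved by RFC 1918.
RFC1918_BLOCKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_rfc1918(net: ipaddress.IPv4Network) -> bool:
    """True if the subnet lies entirely inside one RFC 1918 block."""
    return any(net.subnet_of(block) for block in RFC1918_BLOCKS)


def check_address_plan(subnets: Dict[str, str]) -> List[str]:
    """List problems that would prevent routing between sites without NAT."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in subnets.items()}
    problems = []
    for name, net in nets.items():
        if not is_rfc1918(net):
            problems.append(f"{name} ({net}) is outside RFC 1918 space")
    for (a, net_a), (b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{a} ({net_a}) overlaps {b} ({net_b})")
    return problems


# Hypothetical plan spanning the corporate datacenter and two GCP subnets.
plan = {
    "corp-dc": "10.10.0.0/16",
    "gcp-subnet-a": "10.20.0.0/20",
    "gcp-subnet-b": "10.10.128.0/20",  # deliberately overlaps corp-dc
}
for issue in check_address_plan(plan):
    print(issue)
```

An empty result means the plan could, in principle, be routed directly across the interconnect; any overlap would otherwise force renumbering or a NAT device back into the path.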

(1) Note that some datacenter providers have private networks between facilities on a given campus, metropolitan area, or even different cities within a country, and might reasonably be considered a competitor to a traditional telecoms or fiber optic network vendor.

Every rose has its thorn, right? With "direct connect"-type services, there can be a performance and security advantage over sending traffic over the public internet. And certainly, many enterprises at the early stages of cloud adoption will find them perfectly adequate for their needs. However, looking beyond the consumption of IaaS, how will an enterprise solve performance and security issues for SaaS applications like Salesforce or Office 365? Next, consider that surveys from vendors such as RightScale have found that cloud users are running applications in an average of 1.8 public clouds and 2.3 private clouds; including third-party SaaS applications, the number of cloud vendors in use is typically between four and eight.
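A quick count shows why one-to-one links scale poorly. The sketch assumes, hypothetically, that every region the enterprise operates in gets its own pair of redundant circuits to every cloud vendor. The four and eight vendor counts come from the survey range above; the region counts and the redundancy factor are illustrative.

```python
def direct_links(regions: int, vendors: int, redundancy: int = 2) -> int:
    """Circuits needed if each region has `redundancy` links to every vendor."""
    return regions * vendors * redundancy


# Hypothetical footprints: one, three, and five regions.
for regions in (1, 3, 5):
    counts = ", ".join(f"{v} vendors: {direct_links(regions, v)}" for v in (4, 8))
    print(f"{regions} region(s) -> {counts}")
```

Each circuit brings its own port fees and potentially a separate NSP contract, so cost and contract overhead grow multiplicatively along with the link count.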

It should soon be apparent, then, that a one-to-one connection with each cloud vendor is hard to scale from a cost or operational perspective. Typical WAN topologies and architectures are rigid and static. If a business is already global and set on expanding further into Europe or Asia, what is the cost and time spent setting up links to all of its cloud providers, and how will it manage contracts with multiple NSPs in those regions? From an operational perspective, enterprises should also ask:

A brief look at direct interconnection services shows that there are performance and security benefits for enterprises, provided they have a minimal number of cloud vendors and a static user base. But as discussed, this isn't likely to be the case for many companies, especially those with a mobile workforce and a global presence.

For those companies, new approaches to network architecture need to be considered. In our next article, we'll discuss SD-WAN as a key to creating services that allow for a distributed network architecture, an approach that addresses these challenges. We will also examine in more detail what this architecture looks like, what additional elements should be added, and what considerations should be made in build-versus-buy decisions.

By Mark Casey, CEO, Apcela