The hype around IoT may have been surpassed this year by breathless coverage of topics such as artificial intelligence and cryptocurrencies, but there continue to be interesting developments in IoT-related technology and services. The possibilities for efficiency gains and the creation of entirely new revenue streams in the airline, oil and gas, transportation and other industries are tremendous. Realizing those gains, however, will require adjusting how enterprises process data and how they build the underlying network infrastructure that links IoT devices and compute resources together.

A recent survey of IT decision makers by open source software firm Red Hat tracked IoT investment and implementations through November 2018. It shows a year-over-year increase in the number of companies implementing IoT projects (from 53 to 73 percent), along with an expected increase in project budgets.

How much budget those projects receive, and whether they make a lasting impact, are another matter, but regardless, the momentum for IoT projects is building. That’s turning into investment and development activity from a wide array of vendors, including companies like GE Digital, which offers software for building and maintaining industrial IoT systems. The company announced Predix Edge, an extension of its analytics platform that enables microservices-based analytics functions to run on devices with limited compute and network resources, and that offers device management and analytics capabilities on local virtualized datacenter infrastructure (Predix was previously a cloud-only service).

The application of IoT in industrial settings means that data is being created at the “edge” of the network. Sensors in a factory, or along a pipeline, might be taking measurements that are critical to the production process at different stages. Getting that data processed so that a system can alert and respond to abnormal readings is crucial. The volume of data generated can be large, which makes delivering all of it back to a central cloud expensive and impractical.
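To make the edge-processing idea concrete, here is a minimal Python sketch of a node that inspects sensor readings locally, raises alerts for out-of-range values, and forwards only a compact summary upstream. The `Reading` type, the `process_at_edge` function and the threshold values are hypothetical names chosen for illustration; they are not part of Predix Edge or any other product mentioned here.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, Iterable, List, Optional, Tuple


@dataclass
class Reading:
    """One measurement from a sensor (hypothetical structure)."""
    sensor_id: str
    value: float


def process_at_edge(
    readings: Iterable[Reading], low: float, high: float
) -> Tuple[List[Reading], Dict[str, Optional[float]]]:
    """Flag abnormal readings locally and build a small summary.

    Only the alerts and the summary would be sent to the central
    cloud; the raw readings stay on the edge node.
    """
    batch = list(readings)
    # A reading is abnormal when it falls outside [low, high].
    alerts = [r for r in batch if not (low <= r.value <= high)]
    summary = {
        "count": len(batch),
        "mean": mean(r.value for r in batch) if batch else None,
        "alerts": len(alerts),
    }
    return alerts, summary


if __name__ == "__main__":
    batch = [Reading("pump-1", 50.0), Reading("pump-1", 52.0), Reading("pump-1", 95.0)]
    alerts, summary = process_at_edge(batch, low=40.0, high=60.0)
    print(alerts)
    print(summary)
```

In this sketch the bandwidth saving comes from sending one summary record and a handful of alerts instead of every raw measurement. A real deployment would likely use more robust anomaly detection than a fixed threshold.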

Where should data be stored? Should all of the data be stored, even? While current research indicates that most companies are storing IoT data in centralized datacenters now, that’s set to change as processing requirements become clearer. Currently, only around 10 percent of enterprise-generated data is created and processed outside a traditional centralized data center or cloud, but by 2022, Gartner predicts this figure will reach 50 percent.

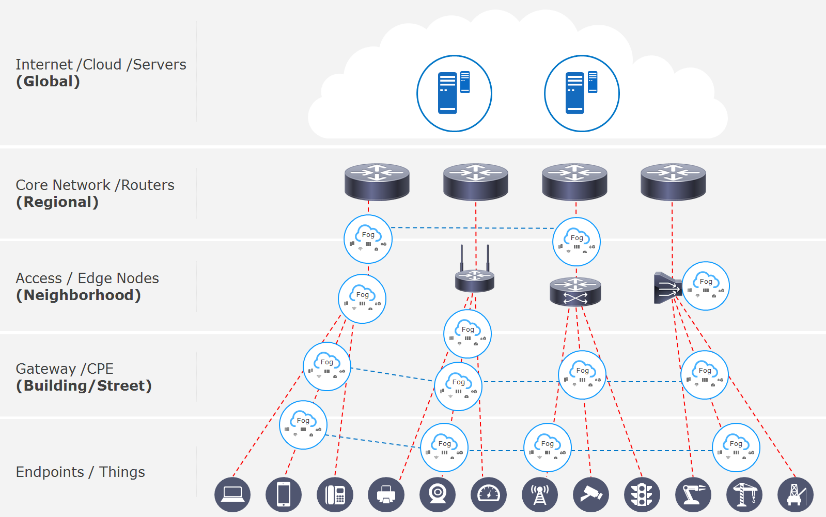

The reality of industrial IoT systems is that data will reside in multiple locations. To address this, vendors have been working together through the OpenFog Consortium to define a framework for managing the compute, storage and network resources involved in what’s referred to as a “fog computing” paradigm. Fog computing is about moving cloud computing closer to end users and devices. This means cloud capabilities (on-demand, scalable, virtualized resources) can reside at different layers of the network (see Diagram A).

Diagram A: IoT ‘Fog’ architecture and communications pathways

Source: OpenFog Consortium

Use cases will dictate the requirements for edge processing services. Variables include:

The capabilities of the network are a common factor in each of the above variables. The OpenFog reference architecture shows hierarchical (i.e., north-south) networking between core cloud resources and the different layers of nodes, as well as networking between nodes at the same layer (east-west).

If we think of an enterprise with several factories in different regions, each factory would be networked with a main cloud resource (perhaps a private cloud at the headquarters, or at a third-party provider), and the factories would be networked with each other. The production systems might also be linked with an analytics system from an equipment vendor for predictive maintenance. A developing issue with a component in Factory 1 (for example) causes an alert to be sent to the vendor, whose systems recognize that the part has aged and is likely to fail soon. Not only is the Factory 1 maintenance team alerted to the situation, but other factories where the part is used are alerted as well, before a failure has occurred, avoiding costly downtime. Depending on the implementation, some analytics capabilities could also be placed in the factory, enabling “factory-to-factory” communication and coordination; an example might be alerting another factory to increase production of an item due to an influx of orders.
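The alert fan-out in this scenario can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a vendor implementation: the `factories_to_alert` function, the inventory mapping and the part identifiers are all hypothetical.

```python
from typing import Dict, List, Set


def factories_to_alert(part_id: str, inventory: Dict[str, Set[str]]) -> List[str]:
    """Return every factory that uses the given part, in sorted order.

    `inventory` maps a factory name to the set of part IDs installed
    there (a hypothetical structure the vendor's system might hold).
    """
    return sorted(name for name, parts in inventory.items() if part_id in parts)


if __name__ == "__main__":
    inventory = {
        "Factory 1": {"bearing-A", "valve-B"},
        "Factory 2": {"bearing-A"},
        "Factory 3": {"valve-C"},
    }
    # Factory 1 reports a developing issue with bearing-A; every site
    # using that part is notified before a failure occurs.
    print(factories_to_alert("bearing-A", inventory))
```

Whether this lookup runs in the vendor's cloud (north-south) or on nodes inside each factory (east-west) is the kind of placement decision the fog model leaves to the use case.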

In much the same way that enterprise use of hybrid cloud necessitates a rethinking of the enterprise WAN architecture, industrial IoT applications will benefit from SD-WAN as a network overlay. In terms of network access, IoT systems will use a variety of interfaces, including cellular and WiFi, and nodes are inter-networked with each other. SD-WAN services can offer the performance and resiliency needed for industrial IoT systems in a cost-effective manner.

The application of SD-WAN for industrial IoT will enable other key benefits. The focus shouldn’t be solely on whether or not an enterprise adopts a fully multi-tiered architecture of fog nodes, as laid out by the OpenFog Consortium. The idea is that the compute and storage resources required will vary with the particular use case; those needs might be well served by a single-tier architecture initially but require more tiers over time. The model illustrates what is happening overall with enterprise IT: there’s a (gradual) swing back from highly centralized cloud computing to a decentralized model.

In this model, forward-looking enterprises are:

The resulting compute and network architecture puts resources closer to end users for better performance.

By leveraging SD-WAN, enterprises have an opportunity to re-examine their network and application architecture and build a more agile, higher-performing infrastructure that can fulfill the requirements of IoT projects.

By Mark Casey