Every day, people pop their health-related questions into Google’s search field. Symptom-related searches alone make up about 1 percent of Google queries. Given the number of things people could search for, that’s quite a lot. The frequency of such searches prompted Google to introduce a direct-answers feature for mobile in 2016.

2016 was also the year Google incorporated the artificial intelligence program RankBrain into its core algorithm. So, while the medical world lags behind in using AI to help diagnose illnesses and treat patients, Dr. Google makes pronouncements each time someone picks up their smartphone and asks about symptoms. Google is working with Harvard Medical School and the Mayo Clinic on these symptom answers.

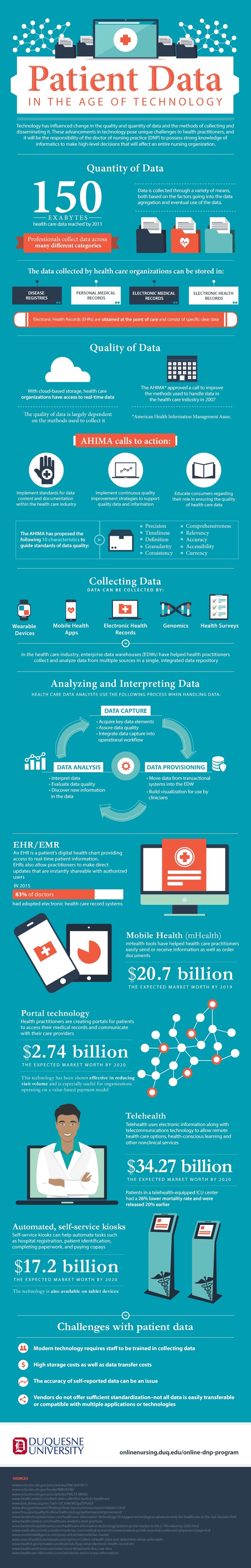

But Google doesn’t face the layers of structural complexity the medical industry faces. Even by 2011—a year that already seems buried in the distant past—there were 150 exabytes of patient data piled up in multiple repositories. This infographic from Duquesne University illustrates how patient data is being created and used:

Notice there’s no mention of AI. There are automated self-service kiosks. There are enterprise data warehouses (EDWs), which are vast repositories of healthcare data that require advanced analytics to sift through. And at least 83 percent of doctors are using electronic health records (EHRs) to store patient data in the cloud, where authorized medical personnel can access information as it’s updated in real time. Clearly, there’s an infrastructure in place with which artificial intelligence could work.

But that infrastructure is flawed. A McKinsey report points out that healthcare information is fragmented and diffuse, housed in separate silos throughout the industry. According to McKinsey’s group of researchers, “Merging this information into large, integrated databases, which is required to empower AI to develop the deep understanding of diseases and their cures, is difficult.”

Along with the education and travel industries, the healthcare industry is on McKinsey’s list of the slowest AI adopters. Perhaps that’s because, financially speaking, healthcare doesn’t need much of a boost. In Nashville, Tennessee, the $78 billion healthcare industry is the state’s largest and fastest-growing employer. One out of every 11 jobs in Nashville will be in healthcare by 2022. This drives increasing demand for real estate as growing businesses search for space and employees buy houses. That kind of growth gives for-profit hospitals little reason to search for AI assistants (which could potentially displace workers). Yet McKinsey estimates AI could save the industry $300 billion per year and increase productivity for registered nurses by as much as 50 percent.

But would it help doctors and patients? Researchers from UCLA think so. They created a Virtual Interventional Radiologist (VIR) that uses the same level of deep learning that the AI in self-driving cars uses to identify objects. The VIR is a chatbot built specifically for physicians.

“By implementing deep learning using the IBM Watson cognitive technology and Natural Language Processing, we are able to make our ‘virtual interventional radiologist’ smart enough to understand questions from physicians and respond in a smart, useful way,” said Dr. Kevin Seals, who programmed the VIR. Physicians can simply text a question to the application, and it will respond with evidence-based information in the form of a chart, text message, or a redirect to a subprogram.

While doctors would most likely be receptive to evidence-based information delivered in this manner, it remains to be seen whether consumers would want to listen to a diagnosis or recommendation from AI. “How much patients would trust AI tools and be willing to believe an AI diagnosis or follow an AI treatment plan remains unresolved,” says McKinsey.

If Google’s direct-answers feature for symptom queries is any indication, consumers seem ready to receive health information from AI. However, we perceive medically approved diagnoses in a different light than Google results. There will have to be a unified stamp of approval from the medical community when it comes to AI in the doctor’s office. Until then, medical AI will remain in the murky realm of good ideas yet to come to fruition.

By Daniel Matthews