The concepts behind Platform as a Service (PaaS) originate from shared IT services (the Shadow IT model), in which multiple tenants run applications on shared systems (a local data center, a server under your desk, etc.).

In recent years, enterprise IT has been understandably reluctant to provision business-critical applications on the cloud. Lately, I have found the enterprise community revisiting those conversations to unlock the larger potential of PaaS platforms and augment its existing investments in SaaS applications. With the advancement of containerized, multi-stack PaaS software, it’s worth looking at how private/hybrid PaaS can enable new ways of deploying and hosting applications within the enterprise.

Before IaaS, we in the IT community hosted our business applications on bare metal. We had regional data centers full of racks, servers (each with a dedicated OS), web servers, dedicated database servers, routers, and everything in between.

The concepts of virtualization evolved from IBM mainframes, tracing back to the 1960s. Instead of running an individual physical server for each application, we spun up VMs for specific applications. Although each VM needed to be provisioned in advance, virtualization added the much-desired benefits of remote management and segregation of resources, while avoiding the pain of managing an individual bare-metal server for each application. This gave enterprises a way to share or spread their IT investments across multiple business applications.

As we migrated to the cloud, vendors carried this notion of virtualization forward. NIST’s definition of cloud computing lists five essential characteristics that cloud offerings are expected to have: on-demand self-service, broad network access, resource pooling, rapid elasticity, and measured service.

Note that ‘virtualization’ is not listed as a required characteristic. Although many would dispute this definition, most of us concede that without some form of virtualization, these key characteristics could not be delivered.

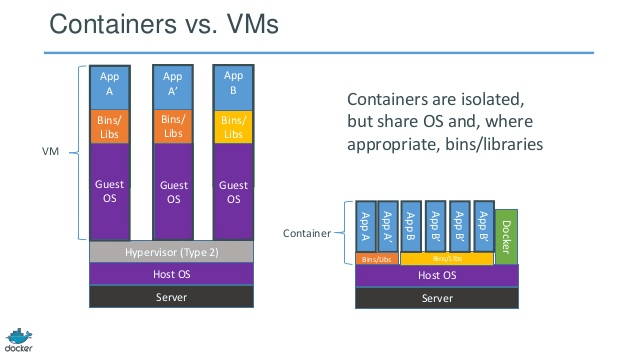

Virtualization allows us to run one or more operating systems (guest OSes) on top of a host OS, while allowing hardware resources to be shared across all the guest OSes.

P.S. – We will NOT be discussing Bare-metal Hypervisors in this post.

This often creates the misconception that virtualization (hypervisor technologies) will continue to drive PaaS (cloud) adoption.

One significant limitation of virtualization is that each VM remains tightly coupled to the underlying OS as well as to the application it hosts. This creates a roadblock to scaling out these applications (as opposed to scaling up).

We can argue for cloning entire VMs to spin up multiple instances (e.g. from AMIs on AWS or VM images on Azure). However, VMs carry significant CPU and memory overheads that make them slow to deploy and boot, not to mention the need to boot each guest OS.

Lastly, there are the licensing costs of an OS license for each VM (e.g. Windows). Together, these limitations significantly reduce DevOps agility, which is a key benefit of adopting Platform as a Service (PaaS). Continuous Delivery (CD) and Continuous Deployment (CD) inherently depend on our ability to provision instances (Dev, QA, Test, UAT, etc.) efficiently as needed and to automate the release processes.
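To make "provisioning instances as needed" a little more concrete, here is a minimal sketch of planning the Dev, QA, Test and UAT environments from a single template. Everything in it (`EnvSpec`, `plan_environments`, the version string) is a hypothetical name invented for illustration; it is not tied to any particular PaaS API.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class EnvSpec:
    """Hypothetical description of one deployable environment."""
    name: str
    app_version: str
    instances: int = 1


def plan_environments(app_version, names=("dev", "qa", "test", "uat"), overrides=None):
    """Derive one spec per environment from a shared base template."""
    overrides = overrides or {}
    base = EnvSpec(name="base", app_version=app_version)
    # Each environment is a copy of the base, with optional per-environment tweaks.
    return [replace(base, name=n, **overrides.get(n, {})) for n in names]


if __name__ == "__main__":
    for spec in plan_environments("1.4.2", overrides={"uat": {"instances": 3}}):
        print(spec)
```

The point is only that every environment comes from the same definition, so adding one more is a data change rather than a manual build.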

Containerization is the ability to virtualize OS resources rather than the underlying hardware, with a single shared OS sitting directly on top of the bare metal. This eliminates the need for a separate OS for each container.
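As a rough back-of-envelope sketch of why dropping the per-instance OS matters, the model below compares the memory needed for N instances of an application deployed as VMs versus as containers. All of the figures in `Footprint` are hypothetical placeholders, not measurements, and the model ignores CPU, disk and boot time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Footprint:
    """Hypothetical memory figures in MB; replace with your own measurements."""
    host_os_mb: int = 1024   # assumed: the single OS on the bare metal
    guest_os_mb: int = 1024  # assumed: one guest OS per VM
    engine_mb: int = 128     # assumed: container engine overhead, paid once
    app_mb: int = 256        # assumed: the application itself


def vm_total_mb(instances: int, f: Footprint = Footprint()) -> int:
    # Every VM carries its own guest OS in addition to the application.
    return f.host_os_mb + instances * (f.guest_os_mb + f.app_mb)


def container_total_mb(instances: int, f: Footprint = Footprint()) -> int:
    # Containers share the host kernel; only the application is repeated.
    return f.host_os_mb + f.engine_mb + instances * f.app_mb


if __name__ == "__main__":
    for n in (1, 10, 50):
        print(n, vm_total_mb(n), container_total_mb(n))
```

Whatever numbers you plug in, the guest OS cost is multiplied by the instance count in the VM case and disappears in the container case, which is the whole argument in one line of arithmetic.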

Although this has been a natural evolution from the roadblocks of virtualization, one can trace the concepts of containerization back to FreeBSD Jails in the early 2000s and, later, to Oracle Solaris Zones.

Simplicity always encapsulates the complex world beneath. (Image Source: Docker Inc.)

This approach lets us install a container engine that hosts containers sharing the underlying OS. Linux Containers (LXC) in particular have gained popularity since Docker challenged the virtualization market. With LXC, individual applications can run within their own containers (each with a dedicated file system, storage, CPU and memory) while sharing a common Linux kernel. Unlike with VMs, there is no hardware abstraction layer.

At the time of writing this post, Windows Containers have been released by Microsoft (Hyper-V Containers). Hyper-V Containers take a slightly different approach to Containerization for stricter isolation. We can review that in a future post.

While container security has been a concern for mass adoption within enterprises, it is being addressed aggressively. Because a common kernel is shared across containers, the primary focus is on further reducing the attack surface of this architecture. We will discuss security vulnerabilities further in future posts.

Major PaaS providers such as Azure, AWS, Salesforce and others solve many of the problems we faced: environment provisioning, expensive or non-existent fail-over options for web applications and networks, costly management, barriers to continuous integration, delivery and deployment (CI/CD/CD), and inherent technical debt. While none of them offers a silver bullet, these companies are leading the innovation toward true PaaS.

To get the most out of them, these technologies also require us to redefine (read: rewrite) our applications to follow the principles of microservices architecture, which has its roots in Service Oriented Architecture (SOA).

Let’s toast to 2016 and our quest for Continuous Evolution (CE?)

By Paul Sourin