New tools and technologies help companies improve performance, cut costs and grow their businesses. But as companies adopt cloud services in greater numbers and refine internal processes for development and operations, security considerations must be front and center.

As companies rapidly adopt cloud services alongside a DevOps approach, so that they can respond quickly to business needs, they must revisit their security plans to confirm they are still effective in preventing and handling cyberattacks, making adjustments where needed. In certain industry segments the situation becomes more acute with the Internet of Things (IoT), because of how these devices are operated and have traditionally been secured. To succeed, companies need to create the right environment for a cybersecurity culture and use automation technologies to protect and preserve data, operations and applications.

Cyberattacks are increasing in number and sophistication. For many security professionals and heads of business it is no longer a case of if something will happen, but when. In fact, according to Alert Logic and Crowd Research Partners, over half of cybersecurity professionals expect there to be successful cyberattacks on their organization in the next year.

Consequently, a third intend to increase security spend on cloud infrastructure and over a quarter on cloud applications. With 40 percent citing a lack of security awareness among employees as an obstacle to stronger cybersecurity, it’s little wonder that 23 percent plan to up their spend on training and education.

Cyberattack prevention will continue to be a two-pronged approach – top-down and bottom-up. Top-down, compliance mechanisms must be implemented, including rigorous security level classification of data and applications and governance to secure certifications. Bottom-up, appropriate tools and technologies for intrusion detection must be in place.

Internal processes are changing. Cloud services and DevOps are converging to bring about the rapid release of value to the business. Previously, companies sourced storage and information-sharing infrastructure and had to add software and applications. Now, services come loaded with pre-built components and applications such as database solutions.

This is convenient, often cost-effective and efficient, but what does it mean for security? Companies have to rethink InfoSec, questioning whether the mechanics of yesteryear are still relevant or need to be refined.

As companies make their adjustments, we can expect to see an increased focus on building ‘zero trust’ systems, with more segmentation within the model and access security enforced even within the network perimeter. In addition, the zero trust way of thinking will be added to the Secure Software Development Lifecycle (SSDLC).
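To make the zero trust idea concrete, here is a minimal sketch in Python of an access decision that ignores whether a request originates inside the perimeter. It is an illustration under assumptions, not a reference design: the `Request` fields, the segment names and the `ALLOWED_FLOWS` allow-list are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    """Hypothetical description of one access attempt."""
    user: str
    authenticated: bool
    device_trusted: bool
    source_segment: str
    target_segment: str
    inside_perimeter: bool


# Assumed allow-list of segment-to-segment flows; anything else is denied.
ALLOWED_FLOWS = {("web", "app"), ("app", "db")}


def is_allowed(req: Request) -> bool:
    """Decide on identity, device state and segment policy only.

    req.inside_perimeter is deliberately never consulted: being on the
    internal network grants no access by itself.
    """
    if not req.authenticated or not req.device_trusted:
        return False
    return (req.source_segment, req.target_segment) in ALLOWED_FLOWS
```

In this sketch an authenticated user on a trusted device outside the perimeter can reach an allowed segment, while an unauthenticated request from inside the network is refused, which is the reverse of a purely perimeter-based model.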

When the correct application of security protocols is left to individual users, the security of business data and applications depends on staff knowledge and training being up to date. Checks and balances, for example around taking appropriate action according to a data set’s security classification, are largely people-dependent, and this is a potential weak spot for all organizations.
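One way to reduce that dependence on people is to encode the classification rule so that software applies it. The following Python sketch assumes a three-level classification scheme and a hypothetical table of destinations; neither comes from a particular standard or product.

```python
from enum import IntEnum


class Classification(IntEnum):
    """Assumed three-level scheme; real schemes vary by organization."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2


# Hypothetical policy: the highest classification each destination may receive.
DESTINATION_CEILING = {
    "public_website": Classification.PUBLIC,
    "internal_wiki": Classification.INTERNAL,
    "encrypted_vault": Classification.CONFIDENTIAL,
}


def may_send(data_class: Classification, destination: str) -> bool:
    """Return True only if the destination is cleared for this data class."""
    ceiling = DESTINATION_CEILING.get(destination)
    if ceiling is None:
        # Unknown destinations are denied rather than assumed safe.
        return False
    return data_class <= ceiling
```

Denying unknown destinations by default reflects the point made in the next paragraph: where a dependency exists, nothing should be assumed.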

Wherever dependencies such as these exist, assumptions should never be made. This goes for the responsibilities of cloud service provision as much as for internal training practices. All too often, assumptions are made about security when contracting for cloud services, and this is contrary to InfoSec due diligence.

With the number and regularity of high-profile data breaches we see, it would perhaps be forgivable to think that companies simply cannot prevent the most persistent of hackers from getting in, and that they should instead focus their efforts on containing intrusions so that attackers can’t progress beyond the entry point to access, copy, destroy or otherwise compromise data.

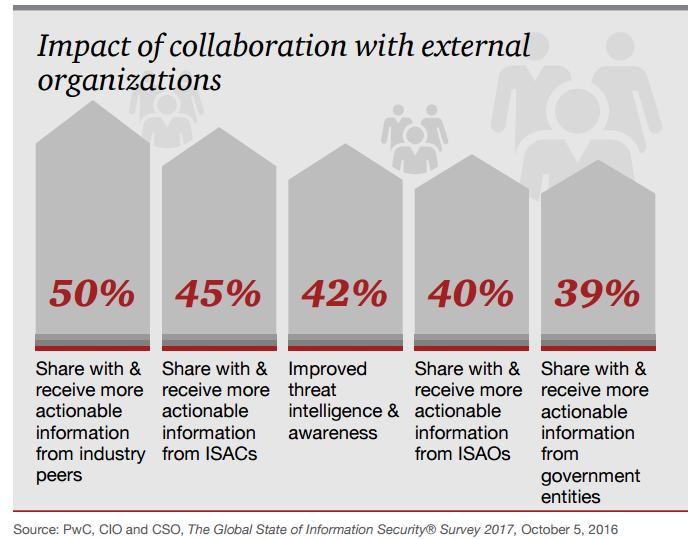

In this, there is some comfort that detection intelligence is improving. According to PwC, 42 percent of those that detected a security incident in 2008 didn’t know the source of it; this has now fallen to below ten percent.

Effective handling of a cyberattack depends on effective planning. This means having in place a method for quickly identifying that an attack has occurred and a plan that can be swiftly put into action to isolate the issue and prevent further spread.

With the risk of cyberattacks being so high, there can be no excuse at all for not having thorough, and tested, disaster recovery and business continuity management plans. These must include a strategy for crisis communications to minimize reputational and brand damage.

Technologies that constantly scan for network vulnerabilities support swift action in the event of data or infrastructure compromise. Understanding what is needed, and the optimal level of investment it will take to protect valuable assets, comes down to knowing the system’s architecture and thoroughly assessing risk levels.

Automation of as much InfoSec as possible makes detection, system shut-down and plan instigation more rapid and effective. With attacks increasing, and becoming more sophisticated, organizations need to invest in their disaster recovery and threat intelligence systems.
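As a rough illustration of automated detection and containment, the Python sketch below isolates any host whose failed-login count crosses a threshold and records a notification. The `Host` record, the threshold value and the action strings are assumptions made for the example; a real deployment would call into its own monitoring and network tooling.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Host:
    """Hypothetical view of one monitored machine."""
    name: str
    failed_logins: int
    isolated: bool = False


# Assumed trigger level; an appropriate value depends on the environment.
FAILED_LOGIN_THRESHOLD = 20


def respond(hosts: List[Host], threshold: int = FAILED_LOGIN_THRESHOLD) -> List[str]:
    """Isolate suspicious hosts and return a log of the actions taken."""
    actions = []
    for host in hosts:
        if host.failed_logins >= threshold and not host.isolated:
            host.isolated = True  # stand-in for a real quarantine call
            actions.append(f"isolated {host.name}")
            actions.append(f"notified response team about {host.name}")
    return actions
```

Because already-isolated hosts are skipped, running the check repeatedly does not duplicate actions, which matters when such a routine runs on a schedule.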

Evolution in how businesses deliver their services externally keeps raising the bar on cyberattack mitigation. The IoT, which is steadily creeping into many areas of our lives, is a case in point. This is a growth area: according to McKinsey, the number of connected homes in the US is growing at a compound annual rate of 31 percent, with 29 million connected homes forecast for 2017.

The depth of connectivity we are now becoming used to introduces security considerations into areas where they haven’t existed before, including utilities provision and the operation and maintenance of private vehicles.

As companies take advantage of technology and process advances to change the way they design, deliver and operate, and as they incorporate connectivity into more services delivered to customers, they must be extra vigilant over cybersecurity.

To prevent cyberattacks and handle them should they occur, companies must plan well, understand their system’s architecture and take advantage of the tools and technologies that support damage limitation. Companies that fail to do this may well fail to protect their brand and reputation and, consequently, their short-term performance levels and long-term future.

By Vidyadhar Phalke