The business imperative to drive better decisions and outcomes using data is both critical and urgent. We have unmistakably entered an era of data-driven transformation, one that had not yet arrived during the early phases of cloud adoption and that is now being spurred on by cloud providers and third parties offering cloud-based data services. According to TechTarget’s Enterprise Strategy Group research survey, The State of DataOps, July 2023, inconsistencies in data across different systems and sources are the top challenge for data users. In this era, we need to reframe how we think about cloud vendor lock-in so that IT organizations can focus their efforts on today's most pressing problems rather than tilting at problems associated with an antiquated concept of lock-in.

The pain associated with cloud vendor lock-in hasn’t always been clear. In the early days of the cloud, it was mostly associated with “application portability” and was largely theoretical. Yes, it is a good principle not to rely on a single vendor for any IT service. But with a clear category leader in AWS and relative homogeneity between the services offered by cloud providers, the actual pain of committing a workload to a single vendor was limited to “maybe I could get that service cheaper from someone else” and “the application will be hard to move”. When cloud services are homogeneous, those pain points are neither critical nor urgent to solve.

Fast forward to today, and the services offered by public cloud providers are no longer homogeneous. The emergence of differentiation and specialization in areas like cloud compute resources and native and third-party data services is no accident. We’ve entered an era of data-driven transformation in which businesses compete on their ability to draw insight from data and make better business decisions. Cloud vendors are innovating rapidly to serve those needs.

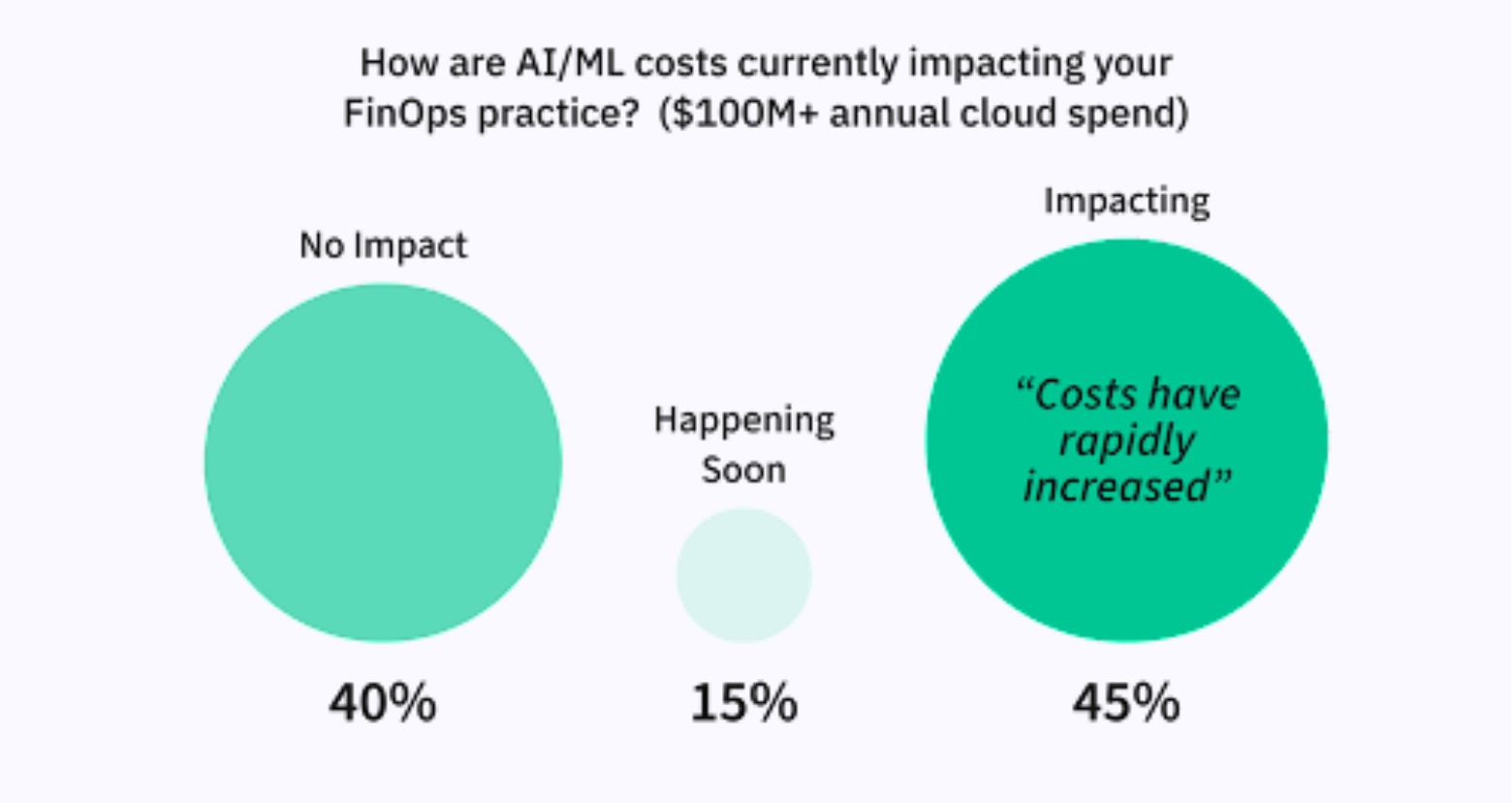

In the area of compute resources, the diversity of available CPUs and GPUs, together with workload specialization, is creating the opportunity to make more fine-grained trade-offs between price and performance. In the area of “value-added” data services, innovation in artificial intelligence, machine learning, business intelligence, and other services is increasingly focused on serving customers with particular data types, vertical markets, and analytics needs.

Whether by happenstance or intention, data-driven businesses either will be or already are using multiple clouds to run applications and to consume value-added data services. According to a global survey from Vanson Bourne and VMware, nearly 1 in 5 organizations is realizing the business value of multi-cloud, yet almost 70% currently struggle with multi-cloud complexity. At the same time, the vast majority of organizations (95%) agree that multi-cloud architectures are now critical to business success, and 52% believe that organizations that do not adopt a multi-cloud approach risk failure. Herein lies the main impediment to data-driven transformation in a multi-cloud world:

Problem Statement: In the age of data transformation, how does an IT organization make data available, in multiple public clouds, to the applications and services chosen by internal data consumers and external partners based on each of their unique business and technical requirements, while simultaneously managing costs?

This problem statement reflects that, in the era of data transformation, we’re contending with a special type of lock-in that has more to do with data accessibility than with application portability.

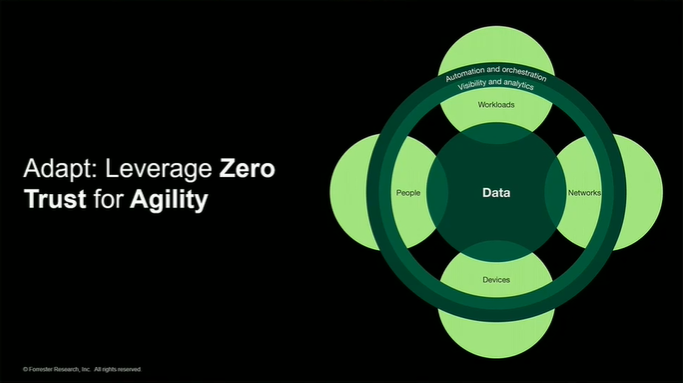

In the era of data transformation, lock-in isn’t, first and foremost, about application portability. Rather, it is a data-level issue synonymous with the term “data gravity”: the phenomenon in which the more data an organization collects, the more difficult it becomes to move that data to a new location or system. In the context of the cloud, as data accumulates in one cloud, it attracts more applications, services, and users to that same cloud. This self-reinforcing “gravitational pull” makes it increasingly challenging to make data available to applications and services in other clouds. As a result, organizations suffering from data gravity find themselves locked into a particular technology or vendor, which limits their flexibility and agility.

Contending with the data gravity version of lock-in requires a fundamental shift in mindset among IT organizations, from an “application-first” view of the public cloud to a “data-first” view. No measure of application portability can accelerate data-driven transformation if an organization cannot first make its data readily accessible to the applications and the native and third-party data services that its internal data consumers and external partners are using in the cloud.

By Derek Pilling